Web Scraping Tools: A Comprehensive Survey

Exploring and evaluating web scraping tools for efficient data extraction from various online sources

In today’s data-driven landscape, web scraping (using software tools to gather data from websites) is essential for extracting valuable insights from online sources. It supports organizations, researchers, and individuals in tasks such as market research and content aggregation, and the rising popularity of tools like BeautifulSoup, Scrapy, and Octoparse has made data extraction accessible and cost-effective. This project was created as part of an exploratory survey to evaluate various web scraping tools and how they perform on different types of data.
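
The extraction step that these tools automate can be sketched with nothing but Python's standard-library `html.parser`. This is an illustrative stand-in for BeautifulSoup or Scrapy, not the project's own code. The HTML snippet and the `product`, `product-title`, and `price` class names are hypothetical placeholders: real pages such as Amazon search results use different markup that changes often, and fetching live pages is outside the scope of this sketch.

```python
from html.parser import HTMLParser


class ProductParser(HTMLParser):
    """Collect title and price text from hypothetical product blocks.

    The class names below are placeholders, not any real site's markup.
    """

    FIELDS = {"product-title": "title", "price": "price"}

    def __init__(self):
        super().__init__()
        self.products = []    # one dict per product block found
        self._current = None  # dict for the product currently being filled
        self._field = None    # field whose text is being captured, if any

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if "product" in classes:
            # A new product block starts: open a fresh record.
            self._current = {}
            self.products.append(self._current)
        for cls in classes:
            if cls in self.FIELDS and self._current is not None:
                self._field = self.FIELDS[cls]

    def handle_endtag(self, tag):
        # Stop capturing text once the field's element closes.
        self._field = None

    def handle_data(self, data):
        if self._field and data.strip():
            self._current[self._field] = data.strip()


# Hypothetical markup; not taken from any real website.
SAMPLE_HTML = """
<div class="product"><span class="product-title">Phone A</span><span class="price">$799</span></div>
<div class="product"><span class="product-title">Phone B</span><span class="price">$999</span></div>
"""

parser = ProductParser()
parser.feed(SAMPLE_HTML)
print(parser.products)
# -> [{'title': 'Phone A', 'price': '$799'}, {'title': 'Phone B', 'price': '$999'}]
```

Libraries such as BeautifulSoup replace the hand-written state tracking above with CSS-selector or tree queries, which is a large part of what the survey's "ease of use" comparison is about.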

The code for this project is available at sahilvora10/Survey-for-Web-Scrapers. If you have any questions, please feel free to contact me at sahilvora2024@gmail.com.

Project Objective

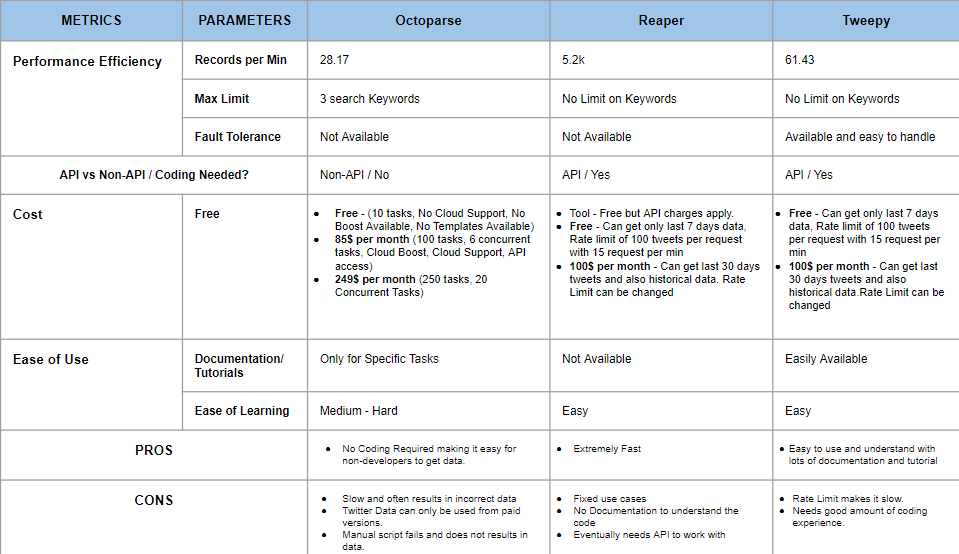

Evaluate and analyze a range of web scraping tools for collecting data from news, social media, and e-commerce websites. The assessment considers speed, accuracy, usability, and cost-effectiveness, with the aim of helping readers choose the tool best suited to their specific business requirements.

Dataset Overview

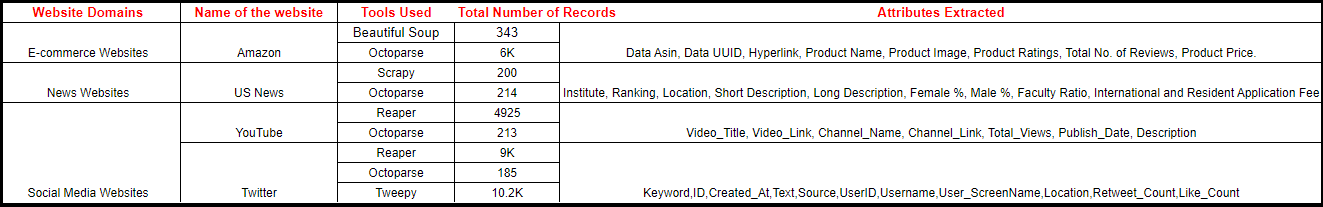

For this project, we collected the following data from four sources:

- Amazon: Product data from the search results for the term "iPhone"

- US News: Data on the best engineering universities in the USA

- YouTube: Video data from the search results for the term "Data Science"

- Twitter: Tweets tagged with the hashtag #ChatGPT

Metrics for Evaluation

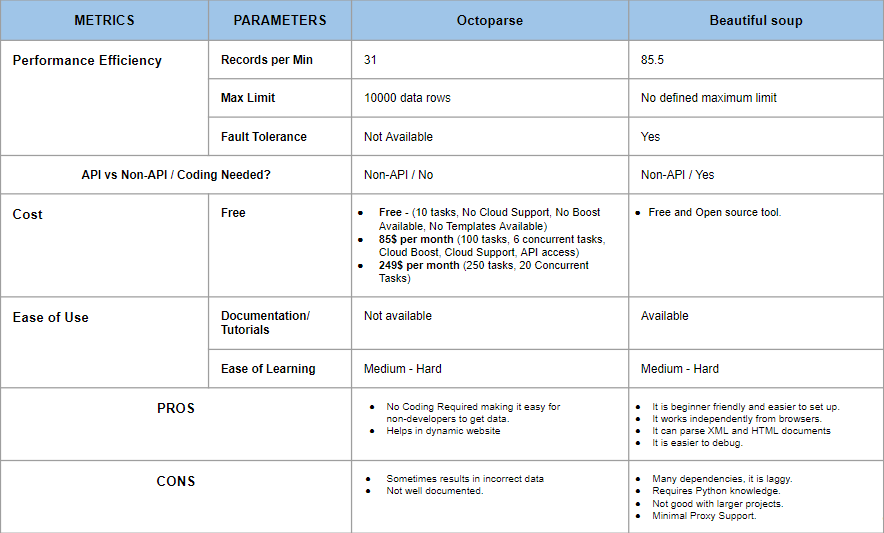

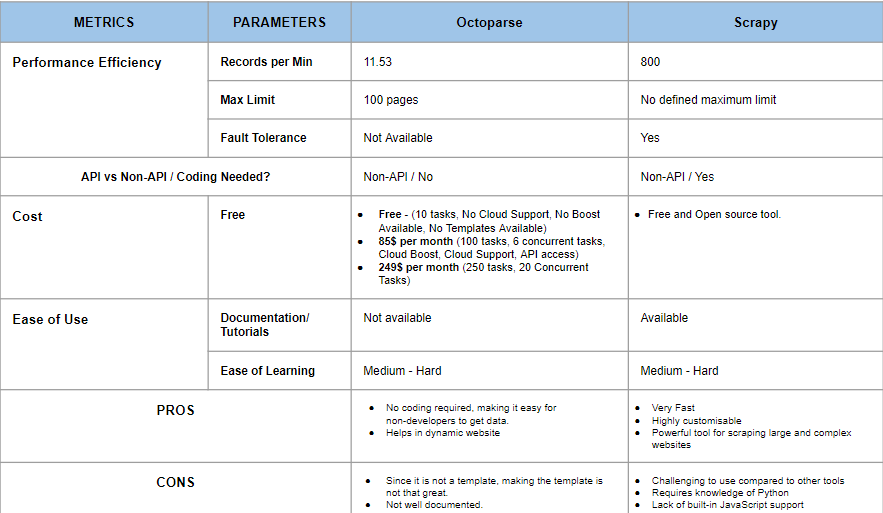

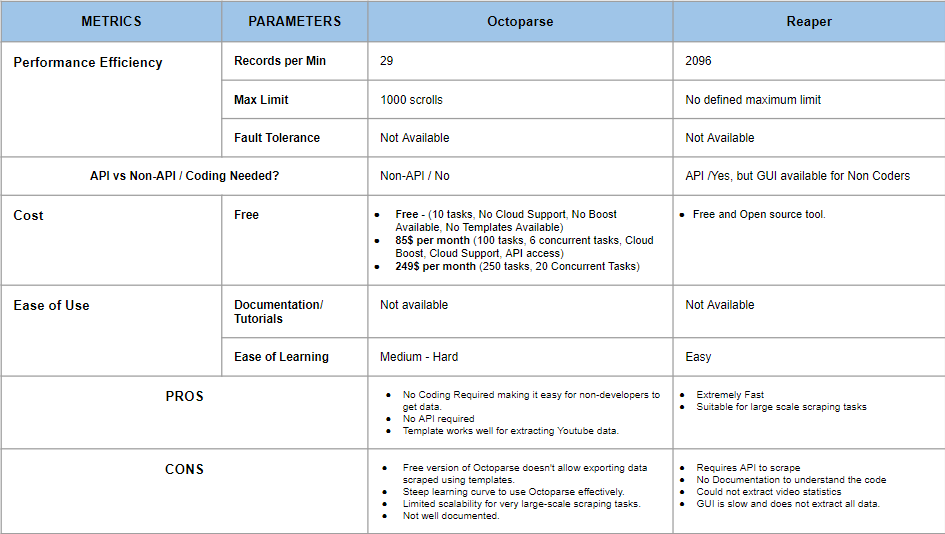

For this project, we formulated the following metrics to evaluate each tool:

- Performance Efficiency
  - Time taken to scrape the same set of data
  - Maximum limit
  - Fault tolerance

- Ease of Use
  - Proper documentation for libraries and tools
  - Scraping procedure
    - API vs. non-API
    - Amount of coding needed
    - Availability of non-API tools

- Cost to Scrape Data
  - Whether the tool is free to use
  - Charges per API call
  - Upper limit on the amount of data
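
Several of these metrics can be measured mechanically. Below is a minimal Python sketch, assuming each tool can be wrapped in a zero-argument function that returns the scraped records; it is not the harness used in this project. `time_scrape`, `with_retries`, `estimate_api_cost`, and `flaky_scrape` are hypothetical helpers, and the retry count, backoff delays, per-call record limit, and price are illustrative assumptions rather than real provider figures.

```python
import math
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def time_scrape(scrape: Callable[[], T]) -> tuple[T, float]:
    """Run a scraping function once; return (result, elapsed wall-clock seconds)."""
    start = time.perf_counter()
    result = scrape()
    return result, time.perf_counter() - start


def with_retries(scrape: Callable[[], T], attempts: int = 3, base_delay: float = 0.5) -> T:
    """Call `scrape`, retrying on any exception with exponential backoff.

    Re-raises the last exception if every attempt fails.
    """
    for attempt in range(attempts):
        try:
            return scrape()
        except Exception:
            if attempt == attempts - 1:
                raise
            time.sleep(base_delay * 2 ** attempt)  # wait 1x, 2x, 4x ... base_delay
    raise ValueError("attempts must be at least 1")


def estimate_api_cost(num_records: int, records_per_call: int, price_per_call: float) -> float:
    """Cost of fetching `num_records` when each (assumed) API call returns at
    most `records_per_call` records and is billed at `price_per_call`."""
    calls = math.ceil(num_records / records_per_call)
    return calls * price_per_call


# Usage: a purely illustrative scraper that fails twice, then succeeds.
calls = {"n": 0}


def flaky_scrape():
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("simulated network error")
    return ["record"] * 10


records, seconds = time_scrape(lambda: with_retries(flaky_scrape, attempts=3, base_delay=0.01))
print(len(records), calls["n"])             # -> 10 3
print(estimate_api_cost(1050, 100, 0.25))   # -> 2.75 (11 calls at 0.25 each)
```

Timing the same fixed query with every tool keeps the speed comparison fair, and counting how many retries a tool needs under simulated failures gives a rough fault-tolerance signal.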

Results and Findings

Visualizations

Summary

Our study shows that web scraping is an efficient and flexible way to automate data collection. Each tool we evaluated has its own limitations, but the data gathered from Twitter, YouTube, US News, and Amazon still yields valuable insights. The detailed, tool-by-tool evaluation is what sets this project apart, giving users practical guidance for choosing a web scraping tool.